A Unique Glimpse Inside ChannelSight's Global Retail Data Engine

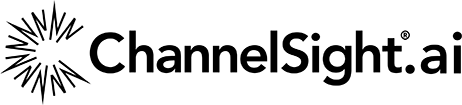

How 5,000 agents transform billions of retailer website signals into trusted commerce intelligence

One of the hardest challenges in ecommerce is turning the chaos of retailer websites into data that brands can trust and base decisions on.

A product page might look simple to a shopper, but underneath it sits a constantly changing mix of prices, stock messages, reviews, search positions, product identifiers, regional variations and purchase paths. One retailer may expose clean structured data, another may render key information dynamically, and another may move reviews, availability or pricing into separate systems entirely.

For brands, that creates a critical question: how do you understand what is really happening at the point of purchase, across markets, retailers and channels, every day?

ChannelSight has spent more than a decade building the infrastructure to answer that question.

Every year, our data acquisition infrastructure makes over a billion web requests and extracts over 3 billion product records from retailer websites around the world. This data powers our Where-to-Buy technology, digital shelf analytics, price intelligence, availability monitoring, search placement tracking, review monitoring and sales performance insights.

This is not just scraping at scale. It’s a retail data infrastructure layer that turns fragmented, constantly changing data into structured commerce signals that brands can use to understand and improve their ecommerce performance.

Turning retail complexity into usable intelligence

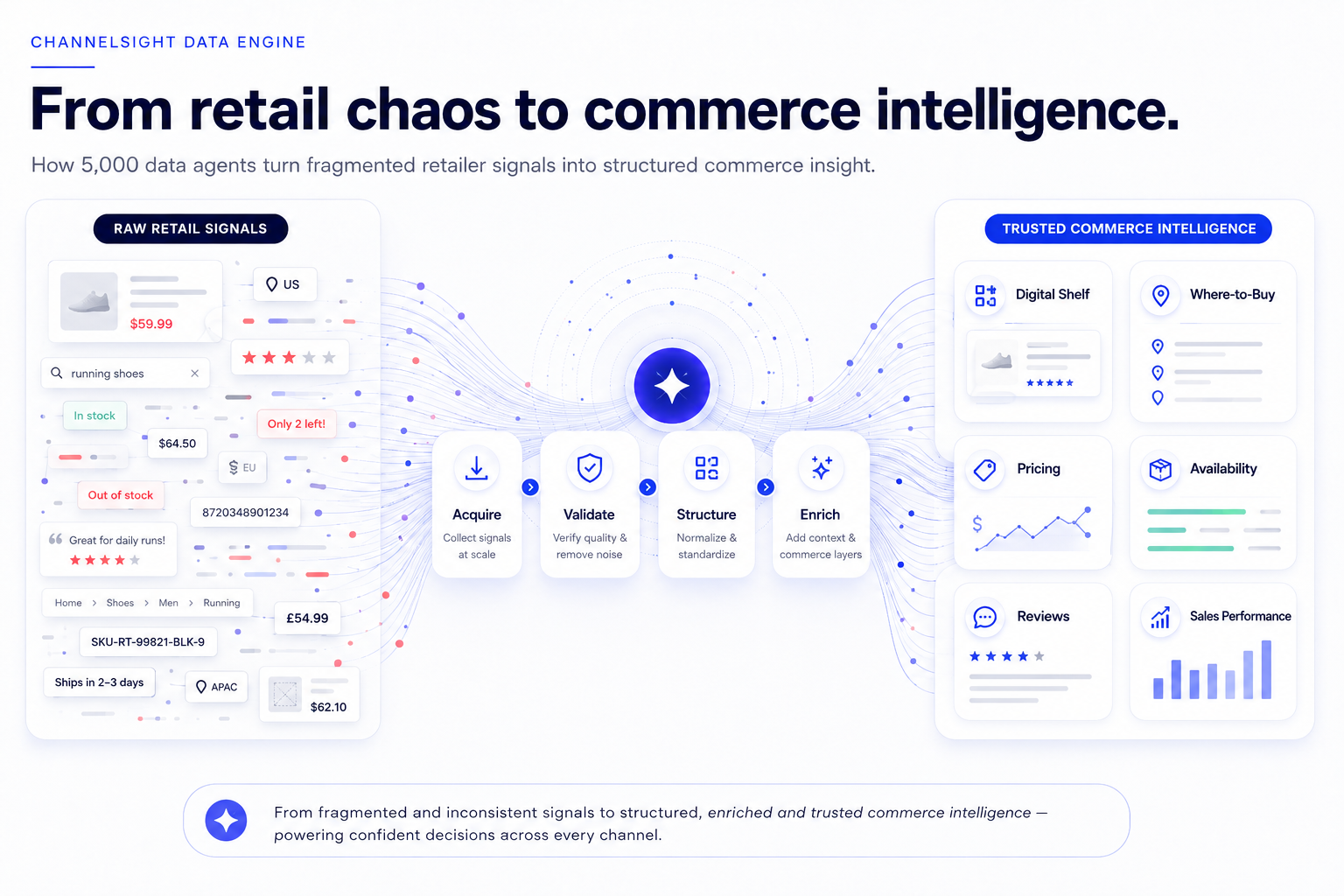

ChannelSight’s view of the digital shelf is different because it connects what brands can see on retailer sites with what shoppers actually do next.

Our platform tracks availability, pricing, content quality, search visibility, reviews and purchase pathways across the digital shelf. We then connect those signals with click traffic and sales performance from our Where-to-Buy technology, helping brands to understand not just whether a product is available online, but whether it is findable, competitive and easy to buy, whether the buyer is a human, an LLM or an agent.

That broader view matters because ecommerce problems rarely show up neatly in one place. A product might be listed on a retailer site but unavailable in key regions. It might be in stock but priced poorly against competitors. It might have weak content, missing identifiers, low search visibility, broken retailer links or a purchase path that fails just as the shopper is ready to buy.

Any one of those issues will cost a brand sales. Worse still, they are often invisible in aggregated reporting, where the symptom shows up as weaker performance but the cause remains hidden.

ChannelSight’s infrastructure is designed to make those issues visible quickly enough for teams to act. The data acquisition layer is part of a broader data ecosystem that also includes Where-to-Buy engagement, retailer and partner integrations, affiliate-derived signals and sales performance data.

That connection, from retail signal to shopper action to commercial outcome, is what makes the underlying data acquisition capability so important. It’s not a back-office utility - it’s one of the foundations on which the ChannelSight platform is built.

A decade of retail data engineering

ChannelSight has been extracting and structuring data from retailer websites for more than ten years, and the way we do it has changed significantly over that time.

In the early days, our acquisition technology relied heavily on browser automation. It was a reasonable starting point: load the page, let the browser render it, then extract what we need. The problem was that this treated every page as if it required the heaviest possible approach, even when the important data was already available in the initial HTML or through accessible data endpoints.

That created unnecessary cost and complexity. Full browser sessions are slower, more resource-intensive and harder to run predictably at very high volumes. They still have an important role, but using them for everything was never going to be the most efficient way to operate globally.

In 2019 we began rebuilding the architecture around a more selective model. The principle was simple: use the lightest reliable method for each retailer, page type and use case.

Today, fast HTTP-based data agents handle the majority of our workload, while headless browsers are reserved for sites where the data genuinely requires dynamic rendering or more advanced technical handling. That shift reduced platform costs by approximately 85%, while also improving throughput and reliability.
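As an illustration only, the "lightest reliable method" principle can be sketched as a cost-ordered fallback: try the cheapest strategy first and escalate only when the required fields are missing. Everything named here (`Strategy`, `acquire`, the two stand-in fetchers, the cost values and the example URL) is hypothetical and is not ChannelSight's actual code.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# A fetcher takes a URL and returns extracted fields, or None on failure.
# Both fetchers below are hypothetical stand-ins that return canned data.
Fetcher = Callable[[str], Optional[dict]]


@dataclass
class Strategy:
    name: str
    relative_cost: int  # assumed rough ranking; lower means lighter
    fetch: Fetcher


def acquire(url: str, strategies: list, required: set) -> tuple:
    """Try strategies from lightest to heaviest; return the first complete result."""
    for strategy in sorted(strategies, key=lambda s: s.relative_cost):
        record = strategy.fetch(url)
        # Accept the result only if every required field was extracted.
        if record is not None and required.issubset(record):
            return strategy.name, record
    raise RuntimeError(f"no strategy produced the required fields for {url}")


def http_fetch(url: str) -> Optional[dict]:
    # Stand-in: pretend the initial HTML exposes only the title.
    return {"title": "Example kettle"}


def browser_fetch(url: str) -> Optional[dict]:
    # Stand-in: pretend a rendered page exposes the price as well.
    return {"title": "Example kettle", "price": 39.99}


STRATEGIES = [
    Strategy("headless_browser", 10, browser_fetch),
    Strategy("http", 1, http_fetch),
]

URL = "https://retailer.example/p/123"  # placeholder URL
title_only = acquire(URL, STRATEGIES, {"title"})
title_and_price = acquire(URL, STRATEGIES, {"title", "price"})
```

In this sketch, a request that only needs the title is served by the HTTP strategy, while one that also needs the price escalates to the browser strategy. In practice the choice would more likely be made once per retailer and page type rather than on every request.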

The difference is not just financial. Lightweight acquisition is faster, more predictable and easier to monitor. It also helps minimise unnecessary load on retailer infrastructure by avoiding full browser sessions where they are not required. When you are operating thousands of data agents across global markets, those small architectural choices compound quickly.

At our scale, responsible data acquisition is not an afterthought – it’s an engineering requirement.

Why this is hard to replicate

Building one scraper for a website is relatively straightforward.

Keeping thousands of retailer-specific data agents working across retailers, markets and product categories is a very different challenge.

Retailer websites change constantly. Product identifiers are inconsistent, availability signals vary by geography and prices can be rendered dynamically. Search results shift. Review structures differ. APIs appear, change or disappear. A page can look correct to a human while silently returning corrupted data to an automated system.

The challenge isn’t the first scraper or agent - it’s keeping the thousandth working. And then keeping the other 999 working while retailers continue to change their sites. And then adding another thousand. And another... and another... and another.

This is where experience really plays a part in our success.

Over time, ChannelSight has built up retailer-specific knowledge, market-specific patterns, known failure modes, validation rules, monitoring thresholds and repair workflows. That accumulated knowledge is part of the asset. It’s not visible in a single product screen, but it is embedded in the infrastructure that keeps the platform running.

What makes this valuable is not any single scraper, dashboard or workflow. It’s the accumulated system: coverage, data history, retailer-specific knowledge, quality controls, repair automation and the connection between digital shelf signals and purchase outcomes.

That’s the capability ChannelSight has built over more than a decade.

Keeping 5,000 agents working reliably

With more than 5,000 automated data agents in production, change is constant.

A redesigned product page, updated CSS selector, changed API response, new session requirement or altered pricing format can break a data agent that worked the day before. At this scale, breakages are not exceptions. They’re part of the operating environment.

The question isn’t whether data agents will break, but how quickly you can detect the issue, understand what changed and repair it without letting bad data flow into the platform. ChannelSight uses a multi-layered monitoring system that catches issues at different stages.

At this scale, the most dangerous problems are not always the obvious ones.

An agent returning no products is easy to spot. An agent returning the wrong price field, confusing a multipack with a single unit, or otherwise returning plausible but incorrect data is harder to catch and potentially more damaging.

That is why our systems monitor not just uptime, but data quality, completeness and consistency. The goal is to prevent bad data from reaching analytics, recommendations or client reporting.
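To make the idea concrete, here is a minimal sketch of a record-level check that looks at completeness and consistency rather than uptime. The field names, the `MAX_PRICE_RATIO` threshold and the issue labels are assumptions chosen for illustration, not ChannelSight's real rules.

```python
from typing import Optional

REQUIRED_FIELDS = ("product_id", "price", "in_stock")  # hypothetical schema
MAX_PRICE_RATIO = 3.0  # hypothetical threshold for a suspicious price change


def quality_issues(record: dict, previous_price: Optional[float] = None) -> list:
    """Return a list of issue labels; an empty list means the record looks sane."""
    issues = []

    # Completeness: every required field must be present and non-null.
    for field in REQUIRED_FIELDS:
        if record.get(field) is None:
            issues.append(f"missing:{field}")

    price = record.get("price")
    if isinstance(price, (int, float)) and not isinstance(price, bool):
        if price <= 0:
            issues.append("price:not_positive")
        elif previous_price is not None and previous_price > 0:
            # Consistency: a large jump in either direction may mean the agent
            # picked up a multipack price or the wrong price field.
            ratio = max(price, previous_price) / min(price, previous_price)
            if ratio >= MAX_PRICE_RATIO:
                issues.append("price:suspicious_jump")
    elif price is not None:
        issues.append("price:not_numeric")

    return issues


clean = quality_issues({"product_id": "A1", "price": 10.49, "in_stock": True}, 9.99)
multipack = quality_issues({"product_id": "A1", "price": 59.94, "in_stock": True}, 9.99)
incomplete = quality_issues({"product_id": "A1", "price": 9.99})
```

Here `clean` is empty, `multipack` is flagged because the price is roughly six times the last known value, and `incomplete` is flagged for the missing stock field. A flagged record would be held back for review rather than passed on to reporting.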

Cost efficiency matters. Data quality matters more.

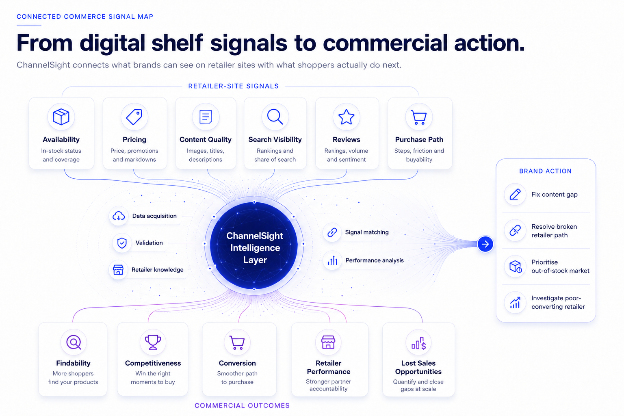

AI-assisted repair and creation

Last year we faced a scaling challenge.

Even with a dedicated team of developers repairing broken data agents, the backlog was growing faster than we wanted. Adding more people would have helped, but it wouldn’t have solved the underlying scaling issue.

Instead, we built an internal AI-assisted repair and creation pipeline that encodes our team's decade of retail data acquisition expertise into a repeatable system.

The system starts by pulling the data agent configuration, recent run logs and dataset samples. It then classifies the issue into one of 15 categories, from simple HTML or CSS changes to more complex access, rendering or data structure issues.

From there, it probes the target website, tests different acquisition strategies, identifies the most appropriate technical approach, and generates a complete, self-contained technical brief for an AI assistant. That brief includes the broken code, live page structure, validation errors, relevant data patterns and expected output schema.

The repaired or newly generated code is then tested locally against sample URLs in an environment that mirrors production. Schema validation enforces data quality before anything is deployed. Once verified, the system can push the data agent to production with backup, deployment checks and ticket updates.
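The test-then-deploy gate described above might look something like the following sketch. The expected schema, the `ready_to_deploy` helper and the stand-in agents are all hypothetical; the real pipeline's schema and checks are not described in this article.

```python
from typing import Callable

# Hypothetical expected output schema: field name -> accepted type(s).
EXPECTED_SCHEMA = {
    "product_id": str,
    "title": str,
    "price": (int, float),
    "in_stock": bool,
}


def schema_errors(record: dict, schema: dict = EXPECTED_SCHEMA) -> list:
    """List every field that is missing or has an unexpected type."""
    errors = []
    for field, expected in schema.items():
        if field not in record:
            errors.append(f"{field}: missing")
            continue
        value = record[field]
        # bool is a subclass of int, so reject it explicitly for numeric fields.
        is_stray_bool = isinstance(value, bool) and expected is not bool
        if is_stray_bool or not isinstance(value, expected):
            errors.append(f"{field}: wrong type {type(value).__name__}")
    return errors


def ready_to_deploy(agent: Callable[[str], dict], sample_urls: list) -> tuple:
    """Run the candidate agent on every sample URL; pass only if all outputs validate."""
    failures = {}
    for url in sample_urls:
        try:
            errors = schema_errors(agent(url))
        except Exception as exc:  # a crashing agent is a failed test, not a crash here
            errors = [f"agent raised {type(exc).__name__}"]
        if errors:
            failures[url] = errors
    passed = bool(sample_urls) and not failures
    return passed, failures


def good_agent(url: str) -> dict:
    # Stand-in for repaired agent code returning a well-formed record.
    return {"product_id": "A1", "title": "Example kettle", "price": 39.99, "in_stock": True}


def bad_agent(url: str) -> dict:
    # Stand-in for an agent that returns the price as an unparsed string.
    return {"product_id": "A1", "title": "Example kettle", "price": "39.99", "in_stock": True}


SAMPLES = ["https://retailer.example/p/1", "https://retailer.example/p/2"]
good_result = ready_to_deploy(good_agent, SAMPLES)
bad_result = ready_to_deploy(bad_agent, SAMPLES)
```

The gate fails closed in this sketch: an empty sample set, a raised exception or a single invalid record all block deployment.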

AI helps us move faster, but it is not the moat by itself.

The advantage is the operating system around it: the known failure patterns, the retailer probes, the validation rules, the monitoring thresholds and the deployment safeguards. AI is operating inside a system built from years of domain expertise.

The same pipeline now supports new data agent creation, from product discovery and site analysis through to deployment and quality verification. This accelerates our ability to onboard new retailers, expand market coverage and respond quickly to client needs.

The data flywheel

The real value is not only in acquiring product data but in connecting that data to commercial outcomes.

ChannelSight combines retailer-level digital shelf signals, including price, availability, content quality, search placement, reviews and product discoverability, with Where-to-Buy traffic and sales performance data.

That creates a more complete view of ecommerce performance: what shoppers can find, what they can buy, where they drop off, which retailers convert and where brands are losing sales.

Over time, this creates a powerful commerce data flywheel.

Better coverage improves visibility. Better visibility improves recommendations. Better recommendations improve performance. And every retailer, product, market and transaction signal makes the system more intelligent.

This is where the infrastructure becomes more than an operational capability. It becomes a strategic data asset.

Behind these metrics is an infrastructure team that treats retail data acquisition not as a commodity but as a core engineering discipline.

Why this matters now

Commerce is becoming more fragmented, more automated and more machine-mediated.

Shoppers are no longer moving through a simple journey from search engine to retailer site to checkout. They are discovering products through marketplaces, retail media, social platforms, recommendation engines and, increasingly, AI assistants that can compare options, interpret product information and influence where the purchase happens.

That shift changes what brands need to understand.

It is no longer enough to know whether a product is listed online. Brands need to know whether it is discoverable, correctly understood, competitively positioned, available in the right places and connected to a purchase path that works.

The same principle now applies to AI discovery. If product information is incomplete, inaccessible or difficult for machines to interpret, the brand may not appear in the recommendation set at all. If availability, pricing or purchase paths are unclear, even a recommended product may fail to convert.

That is why ChannelSight’s Agent Ready work builds naturally on the same foundation as our digital shelf and Where-to-Buy infrastructure. For more than a decade, we have been building the systems, datasets and operational knowledge required to make the retail web measurable. Now that same foundation can help brands understand how machines see, interpret and act on commerce data.

The future of ecommerce will not be won only by the brands with the best products or the biggest media budgets. It will be won by brands that are visible, understandable and purchasable wherever decisions are made.

ChannelSight is built to help them be exactly that.